Everything you need to know about hunting with a pump-action shotgun!

When it comes to hunting guns, pump-action shotguns are increasingly popular with hunters. But what are the advantages of this

A hunting directory: a must for people working in the field

Do you work in the hunting industry? Do you have a hunting website you would like to promote? We have the solution: list it in a hunting directory, or more precisely in the "la communauté de la chasse" directory. The points below show how much you stand to gain by registering your site.

What is a directory?

Broadly speaking, an online directory works like a paper one. It has categories for activities, for location and, of course, for contact details. You can find everything you need there, in our case hunting websites. In short, it is a way to make a website known and gain significant benefits, such as making sales and building customer loyalty online.

ADD YOUR SITE TO THE DIRECTORY.

In this Wiki munition, we present the various cartridges used for hunting. No ballistics and no grand theories: the goal is to make the subject accessible to beginners. Many young hunters have questions about ammunition, such as "What is the difference between the 7×64, the 7.62 and the 7.65?" There are many differences between these calibers. We cover the calibers most commonly used in hunting, such as the 222 REM, 270 WIN and 280 REM, as well as more specialized cartridges. We also explain what each caliber was designed for, for example "designed for hunters on driven hunts" or "suited to beaters". We include a non-exhaustive list of firearms chambered in each caliber, along with the ammunition from manufacturers (Federal, Norma, Sauvestre, Tunet, Remington, etc.) whose catalogs include cartridges in that caliber. The aim is to be effective when practicing our favorite pastime and, above all, to know which cartridge to buy once you get to the gun shop.

Like many people, you are probably wondering whether listing your site in the directory is a good idea. Rest assured that our directory, and directories in general, offer many advantages.

First, listing your site in directories boosts its visibility. When you register in a directory, you officially establish for your customers that your site exists, along with the hunting products you sell or rent. In addition, our directory lets your customers leave comments, which further builds trust between you and them.

Second, the directory will significantly raise your site's profile. Our directory helps you become better known and make more sales. Potential customers will discover your site through the directory and will no longer need to visit a shop to find what they are looking for.

Third, and most importantly, registering in our directory is a good way to improve your search engine ranking. When you create a website, you need it to appear on the first page of search results. Why? About 60% of internet users never even look at the second page, so your site must be on the first page if you want customers. This is where the directory comes in, since it is one of the tools for search engine optimization. Once your hunting site is listed in our directory, it is indexed, which makes it easier for search engines to find when someone runs a search.

In short, since listing your site in directories brings many advantages, we invite you, and indeed recommend you, to register on our directory. It will give you visibility and, as a result, customers. Registering on la communauté de la chasse is also very easy: you can list your hunting website in just a few minutes.

Do you love hunting? Joining the Parlons Chasse forum is a chance to meet people who share the same passion. Whenever a topic catches your interest, you can ask the members right away, and they will be happy to discuss it with you. Our forum brings together professional hunters, sellers and renters of hunting equipment, and other people involved in hunting. Being on the forum also gets you quick, varied and well-argued answers about hunting. It is an opportunity to learn new hunting techniques, get sound advice on ammunition and receive recommendations. Another small advantage is that you can remain anonymous in your exchanges. You may also find fellow hunters nearby. Members discuss hunting news in France and around the world, firearms and ammunition, hunting dogs, and training for careers in hunting.


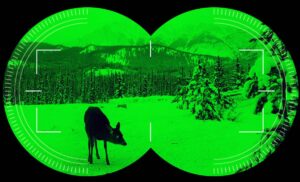

Night-vision binoculars are not just a tool; they represent a genuine revolution in the world of hunting. While

Discovering its origins: the Slovak Pointer (Braque Slovaque), or Slovenský Hrubosrstý Stavač in Slovak, is a breed that, as its name suggests,

When we talk about the Korthals Griffon, we are talking about a breed that has, over the centuries, proven its undeniable worth both in

If you want to start a hunting business, for example by opening an online shop or a company, the forum can be very useful. You can keep up with hunting trends and find out what people need most for hunting. It is also an opportunity to identify which products sell best and which have received the most negative feedback.

Lead discussions by starting a debate on a topic that interests you. You will hear several points of view, which can be a real asset for your business plan.

Taking part in forum discussions is one of the most popular ways to improve your online reputation. If you run a hunting-related business, you can also find potential customers on a forum.

Make a point of establishing your presence by asking questions and joining discussions between members. With your signature and a link to your site, you can reach more prospects. Many internet users also look for products on forums.

A forum is also a way to expand your network. You have a good chance of finding potential partners in your area, and conversations often end with an exchange of contact details.

That is not all: taking part in the Parlons Chasse forum will give you a better idea of what your customers think. It is a way to collect positive reviews and learn what needs improving. Since most members prefer to remain anonymous, they feel freer to give feedback.